Last updated 4/29/2020

by Laura Maher & Adrian Pelliccia

Laura Maher is a Relationship Manager at Siegel Family Endowment

Adrian Pelliccia is the Head of Communications at Siegel Family Endowment

In a time when a clear, objective, and trustworthy media and information landscape is more important than ever, the COVID-19 pandemic is magnifying many troubling and increasingly urgent trends in mis- and disinformation. Over the past few weeks, inconsistent information about the spread of the virus, potential remedies, and best practices for self-protection has contributed to an atmosphere of confusion and fear around the world. The reliability of our information is now a matter of life and death.

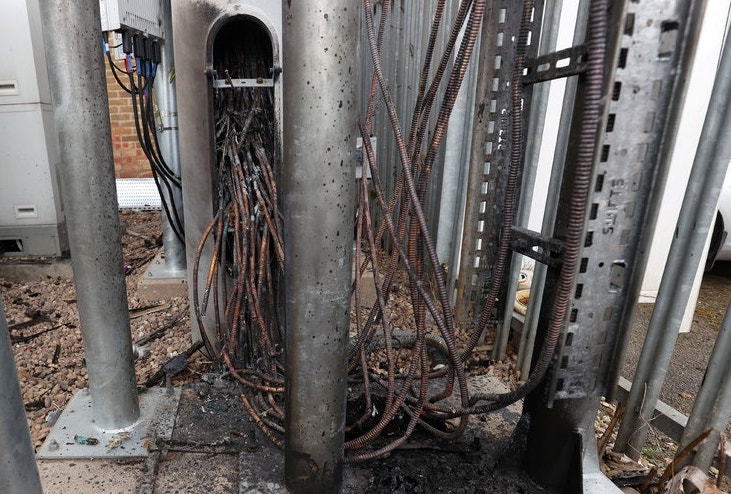

Many longstanding concerns shared by researchers and policymakers in this space are entering mainstream conversation and playing out across the largest social media platforms, such as Facebook, Twitter, and YouTube. In some especially striking cases, misinformation that spreads in forums on social channels has started to affect real-world behavior: for instance, an unsubstantiated conspiracy theory linking new 5G networks to the spread of the coronavirus has led individuals to destroy cell towers throughout the United Kingdom. The pandemic is amplifying existing fears among a wide segment of the population and creating conditions for baseless misinformation to spread and to produce real-world consequences that are felt immediately.

From the New York Times: Wires of a telecom tower that was damaged by fire in Birmingham this month. Credit: Carl Recine/Reuters

Combating misinformation and ensuring information integrity will only grow more urgent as the pandemic continues. Over the past few years, Siegel Family Endowment (SFE) has developed strong partnerships with several organizations focused on preventing and mitigating misinformation. Those grantees are now springing into action, developing responses to the pandemic and working to strengthen the information ecosystem surrounding it. We’re compiling an overview of what they are doing to stop misinformation around COVID-19, and we will keep the list below up to date as their efforts evolve.

Here’s how some of our misinformation-focused grantees are responding to the crisis:

Developing reliable reference materials in more than 100 languages

Wikimedia, the parent organization of Wikipedia, is a global movement whose mission is to bring free educational content to the world. Right now, the community of contributors, editors, and moderators who keep Wikimedia’s resources live and dynamic is maintaining a robust information ecosystem of comprehensive, objective, and up-to-date articles. “When things happen, the world looks our way,” said Katherine Maher, CEO of the Wikimedia Foundation, in a recent post on the organization’s response to COVID-19. “It’s our mission, and responsibility, to keep this critical resource available in moments of crisis.”

Fulfilling that mission has become more urgent than ever as Wikipedia experiences record levels of traffic. Notably, its COVID-19 article is backed by more than 975 medical references and has been translated into more than 100 languages to increase accessibility. In March alone, the article was viewed more than 180 million times, a 3,600 percent increase in views since January. The coronavirus articles on English Wikipedia are part of WikiProject Medicine, a collection of some 35,000 articles that are ferociously watched over by nearly 150 editors with backgrounds and professional expertise in medicine and public health. The Wikimedia team is directing resources toward maintaining site continuity, providing accurate information in multiple languages, and supporting editors who are producing content directly relevant to the pandemic.

Wikipedia is also addressing misinformation about coronavirus and COVID-19 head on, and their article dedicated to debunking misinformation about the pandemic is currently available in 30 languages.

Hosting virtual network-building talks and events to share knowledge and resources about the impact of COVID-19

Data & Society, a research institute focused on the social implications of data, technology, and automation, has a history of working on media manipulation and disinformation. It has moved much of its network-building programming online and is gathering ideas for future virtual convenings about the impact of COVID-19 on its existing research areas and other timely subjects, including:

- Medicine, biomedical surveillance, patient privacy vs. public health

- Impact on platformed gig workers, labor, and precarity (e.g. warehouse workers, sick leave)

- Content moderation and platform governance

- Misinformation and disinformation and their impact on information quality

- Privacy, community security, and surveillance during the pandemic

- Technology infrastructures and the pandemic

- Impact of the pandemic on the 2020 census

- Evolving AI standards and policies in the crisis

A roster of upcoming events can be accessed on their website. We’ll update this post with more details as they’re finalized.

Publishing guidelines and tools to cut misinformation off at the source

The Mozilla Foundation has worked since 2003 to fuel a healthy internet by supporting fellowships, convening internet leaders, producing critical research, and engaging in advocacy. “Topics related to trustworthy AI and data sharing feel more urgent than ever,” said Mark Surman, Mozilla’s Executive Director. “We have a chance to evolve data sharing and AI norms that are responsible and respectful — yet without a sustained focus on why it’s important and how it’s possible, we will likely head in the opposite direction.” Mozilla’s research fellows, who are funded by SFE, are pursuing a range of projects and developing resources tailored specifically to countering COVID-19 misinformation. Current projects include:

- A beta version of an app designed to analyze and visualize data in order to identify the points of origin of COVID-19 disinformation

- A contribution to an open letter to the UK’s NHS about ethical best practices for new technologies being considered to suppress the spread of the coronavirus

- A piece by Surman, ‘Privacy, pandemics and the AI era’, that explores how to set new norms for trustworthy data sharing and for the AI that runs on that underlying data

Conducting research on how the pandemic and politics intersect on social media

The NYU Center for Social Media and Politics (CSMaP) examines the production, flow, and impact of social media content in the political sphere, and supports research that uses social media data to study politics. CSMaP is also continuing to advance research on the best methods for expediting and scaling crowdsourced fact-checking and for slowing the spread of misinformation online. In a recent article for The Washington Post, CSMaP’s leadership spoke to the heightened urgency of this issue, writing that “the rapidly changing information environment surrounding covid-19 has the potential to magnify the vulnerabilities revealed by previous studies of online misinformation.” In response, the team is considering extending an existing research project on susceptibility to misinformation, which is becoming increasingly relevant as the pandemic exerts a growing influence on the 2020 election. To learn more about supporting this research effort, contact CSMaP Executive Director Zeve Sanderson at zns202 [at] nyu.edu.

The uncertainty of the moment is bringing many longstanding concerns from the information integrity space into mainstream conversation – and now, presenting reliable and trustworthy information can mean the difference between life and death. We’re hopeful that this ongoing work will create a more reliable information ecosystem that advances public safety in the long term.